Which HDMI Cables Support HDR? HDMI 2.0 & 1.4 Explained & More

What To Know

- Only HDMI 2.0a and later versions officially support HDR: 2.0a added HDR metadata signaling on top of HDMI 2.0's 18 Gbps bandwidth, which is enough for HDR's wider color gamut and dynamic range at 4K.

- Different HDR standards exist, with HDR10 being the most basic and widely supported, while HDR10+ and Dolby Vision offer dynamic metadata for enhanced scene-by-scene optimization.

- No special HDMI cable is needed for HDR; any cable with enough bandwidth for HDMI 2.0a or later (a Premium High Speed or Ultra High Speed cable) can carry HDR.

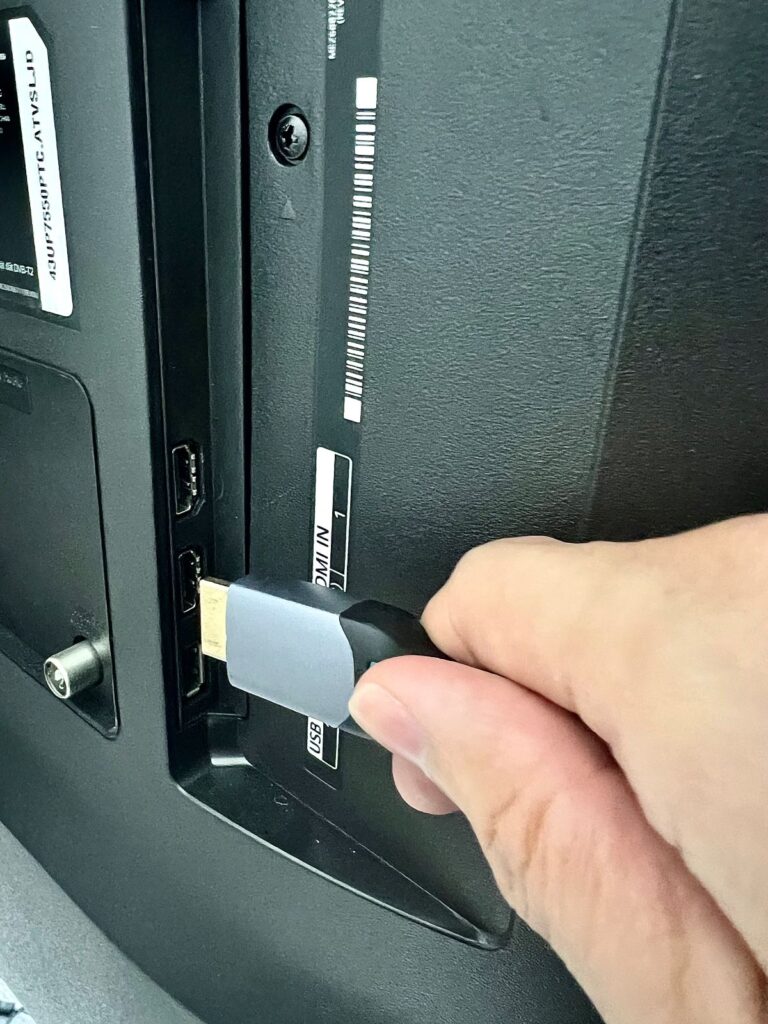

Have you just plugged in your HDMI cable and are now puzzled about HDR compatibility?

Dive into this guide as we demystify the connection between HDMI versions and HDR support.

From the inception of HDMI 1.0 to the widely adopted HDMI 1.4 and the sophisticated HDMI 2.0, we’ll elucidate every version, ensuring you gain a thorough understanding of their distinct capabilities and advancements.


What is HDR?

HDR (high dynamic range) is a video and display technology that carries color and brightness information across a much broader range than standard dynamic range (SDR).

“Dynamic range” denotes the ratio between the highest and lowest values in a given range; for a display, that means its brightest and darkest luminance. “High dynamic range” means being able to record or reproduce a large portion of that high-low spectrum.
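To make the ratio concrete, here is a minimal Python sketch that turns a display's brightest and darkest luminance into a contrast ratio and into photographic stops, where each stop is a doubling of light. The nit values below are hypothetical examples, not measurements of any real TV.

```python
import math

def dynamic_range(highest: float, lowest: float) -> tuple[float, float]:
    """Return (contrast ratio, dynamic range in stops) for two luminance values in nits.

    Each stop is a doubling of light, so stops = log2(highest / lowest).
    """
    if highest <= 0 or lowest <= 0:
        raise ValueError("luminance values must be positive")
    ratio = highest / lowest
    return ratio, math.log2(ratio)

# Hypothetical values for illustration only.
sdr_ratio, sdr_stops = dynamic_range(100.0, 0.1)     # 100-nit peak, 0.1-nit black
hdr_ratio, hdr_stops = dynamic_range(1000.0, 0.005)  # 1,000-nit peak, 0.005-nit black
print(f"SDR-like display: {sdr_ratio:,.0f}:1, about {sdr_stops:.1f} stops")
print(f"HDR-like display: {hdr_ratio:,.0f}:1, about {hdr_stops:.1f} stops")
```

The log scale is why a jump from 1,000:1 to 200,000:1 reads as "about 7.6 more stops" rather than "200 times better".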

Contrary to popular belief, HDR is not 4K-specific; it can be paired with a lower-resolution display too. For HDR to do its job, it requires two things: an HDR-capable display (TV, monitor, etc.) and native HDR content. If the source material is a physical disc, you'll need an Ultra HD Blu-ray player to read the HDR-encoded information.

HDR preserves increased details in a scene’s highlights and shadows, resulting in richer colors, increased image depth, and enhanced contrast. All these enhancements offer a more visually striking and immersive viewing experience.

Pull up an image or scene with a starry night background to check how well HDR works on a TV. Excellent HDR implementation will showcase the contrast between the night sky’s deep blacks and its sprinkled stars sparkling distinctly.

In a non-HDR setting, the stars will not stand out as much and may fade into the blackness of the sky.

Do All HDMI Versions Support HDR?

No, not all HDMI versions support HDR. Only HDMI 2.0a and later versions officially support HDR, as they pair HDR signaling with the 18 Gbps of bandwidth that 4K HDR requires.

HDMI versions preceding v2.0a do not boast the specifications and features needed to manage HDR’s wider color gamut, increased color depth, greater dynamic range data needs, etc.

They lack the signaling capabilities and bandwidth to move HDR information between compatible machines.

It’s worth noting that new features and functions cannot be added to existing HDMI versions and hardware after the fact. That is why new HDMI versions are released periodically to keep up with evolving technologies.

| Standard | Max Resolution & Refresh Rate | Bandwidth | Supports HDR |
|---|---|---|---|
| HDMI 1.0 | 1080p @ 60 Hz | 4.95 Gbps | No |
| HDMI 1.1 | 1080p @ 60 Hz | 4.95 Gbps | No |
| HDMI 1.2 | 1440p @ 30 Hz | 4.95 Gbps | No |
| HDMI 1.2a | 1440p @ 30 Hz | 4.95 Gbps | No |
| HDMI 1.3 | 5K @ 30 Hz (1) | 10.2 Gbps | No |
| HDMI 1.4 | 5K @ 30 Hz (1) | 10.2 Gbps | No |
| HDMI 1.4a | 5K @ 30 Hz (1) | 10.2 Gbps | No |
| HDMI 1.4b | 5K @ 30 Hz (1) | 10.2 Gbps | No |
| HDMI 2.0 | 8K @ 30 Hz (1) | 18.0 Gbps | No |
| HDMI 2.0a | 8K @ 30 Hz (1) | 18.0 Gbps | Yes |
| HDMI 2.0b | 8K @ 30 Hz (1) | 18.0 Gbps | Yes |
| HDMI 2.1 | 10K @ 120 Hz (2) | 48.0 Gbps | Yes |
| HDMI 2.1a | 10K @ 120 Hz (2) | 48.0 Gbps | Yes |

(1): Possible by using Y′CbCr with 4:2:0 chroma subsampling.

(2): Possible by using Display Stream Compression (DSC).
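The resolutions marked (1) are reachable because chroma subsampling shrinks the data per pixel. The Python sketch below is a simplified model: it counts only the visible pixels and deliberately ignores blanking intervals and HDMI's encoding overhead, so a real link needs more than these figures. It compares the raw data rate of 8K at 30 Hz in each Y′CbCr format and shows that 4:2:0 carries half the data of 4:4:4.

```python
# Average samples per pixel for each Y'CbCr format: one luma (Y') sample per
# pixel, plus two chroma samples (Cb, Cr) shared across 1, 2, or 4 pixels.
SAMPLES_PER_PIXEL = {
    "4:4:4": 3.0,  # full-resolution chroma
    "4:2:2": 2.0,  # chroma halved horizontally
    "4:2:0": 1.5,  # chroma halved horizontally and vertically
}

def active_video_gbps(width: int, height: int, fps: float,
                      bits_per_sample: int, chroma: str) -> float:
    """Raw data rate of the visible pixels only, in Gbps.

    Blanking and link encoding overhead are ignored on purpose.
    """
    bits_per_second = width * height * fps * SAMPLES_PER_PIXEL[chroma] * bits_per_sample
    return bits_per_second / 1e9

for chroma in SAMPLES_PER_PIXEL:
    rate = active_video_gbps(7680, 4320, 30, 8, chroma)
    print(f"8K @ 30 Hz, 8-bit {chroma}: {rate:.1f} Gbps of active video")
```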

Notes:

- HDMI 2.0a supports HDR10, a popular HDR format.

- HDMI 2.0b supports HLG (hybrid log-gamma), a broadcasting-friendly HDR format.

- HDMI 2.1 supports multiple HDR formats, such as HDR10, HDR10+, HLG, and Dolby Vision. It introduced Dynamic HDR, which facilitated frame-by-frame and scene-by-scene HDR optimization.

Different HDR Standards

HDR is not a monolith. It comes in several formats for video production, chiefly HDR10, HDR10+, and Dolby Vision. Each has its own requirements and specifications, but they share the same goal: delivering the best possible HDR picture to viewers.

| | HDR10 | HDR10+ | Dolby Vision |
|---|---|---|---|
| Founding year | 2015 | 2017 | 2014 |
| Supporting brands | Acer, ASUS, Dell, LG, Panasonic, Philips, Samsung, Sony, Vizio | Samsung, Panasonic, Amazon | LG, Philips, Sony, TCL, Vizio |
| Bit depth (color) | 10-bit | 10-bit | 12-bit |
| Metadata | Static | Dynamic | Dynamic |
| Peak brightness (nits) | 10,000 | 10,000 | 10,000 |
| Nature/ownership | Open | Open | Closed/proprietary |
| Cost | No royalty or other fees | No royalty, but admin fees apply | Both royalty and yearly charges apply |

Notes:

- Although HDR10 and HDR10+ allow a peak brightness of 10,000 nits on paper, most HDR10/HDR10+ content is mastered at 1,000 to 4,000 nits. Dolby Vision content is usually mastered at a higher peak brightness, but still nowhere near the 10,000-nit ceiling; that level of brightness is reserved for prototypes and testing.

- Unlike HD-DVD and Blu-ray, the different HDR formats are not mutually exclusive. In other words, a single Blu-ray disc could contain Dolby Vision, HDR10+, and HDR10 metadata. In practice, however, discs that carry all three are rare.

- Streaming devices capable of handling all three HDR formats include the Fire TV Stick 4K, Fire TV Cube, and Chromecast with Google TV. Not all Apple TV 4K generations support HDR10+; most support only Dolby Vision and HDR10.

HDR10

HDR10 is the most popular HDR standard but also the most basic. It is open, meaning any content distributor or producer can use the format freely.

The format uses static metadata, meaning the same brightness information applies across the entire piece of content. Dynamic metadata, by contrast, adjusts those levels frame by frame or scene by scene.
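The practical difference shows up in tone mapping, where a TV squeezes content that is brighter than its panel into the range it can actually display. The Python sketch below is deliberately simplified: the film, its per-scene peaks, and the 800-nit TV are hypothetical, and it uses plain linear scaling, whereas real TVs use their own non-linear tone curves. With static metadata (HDR10's includes a single title-wide peak value, MaxCLL), every scene is treated as if it were the brightest one; with dynamic metadata, each scene uses its own peak.

```python
# Hypothetical per-scene peak brightness (nits) for one film.
SCENE_PEAKS = {
    "candle-lit room": 150.0,
    "night street": 400.0,
    "sunlit beach": 4000.0,
}
DISPLAY_PEAK = 800.0  # hypothetical TV that tops out at 800 nits

def scale_factor(content_peak: float, display_peak: float) -> float:
    """Naive linear tone mapping: dim content only if it exceeds the display's peak."""
    return min(1.0, display_peak / content_peak)

# Static metadata: one peak value describes the whole title (its brightest scene).
title_peak = max(SCENE_PEAKS.values())

for scene, peak in SCENE_PEAKS.items():
    static = scale_factor(title_peak, DISPLAY_PEAK)  # identical for every scene
    dynamic = scale_factor(peak, DISPLAY_PEAK)       # uses each scene's own peak
    print(f"{scene:<16} static x{static:.2f}   dynamic x{dynamic:.2f}")
```

In this toy model, static metadata dims every scene to 20% brightness, including the candle-lit room the TV could have shown untouched, while dynamic metadata scales down only the beach scene.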

The Consumer Technology Association (CTA) first announced HDR10 in August 2015. A wide array of TVs and other display devices support HDR10, which includes TV and monitor brands such as LG, Samsung, Dell, Sony, Vizio, VU, Sharp, etc.

Many well-known brands support HDR10 because it was the first format of its kind and is an open, royalty-free standard. Its open nature means TV manufacturers don't have to pay anything extra to include HDR10 in their products.

PS4 and PS5 only support HDR10, whereas the latest Xbox One consoles support HDR10 and HDR10+. The Series X and One also support Dolby Vision.

HDR10+

As the name suggests, HDR10+ (or HDR10 Plus) is an improvement over HDR10.

The format appends a dynamic metadata layer over HDR10 source files, which helps adjust each scene’s brightness and tone on the go. The metadata preserves more details between very dark or bright scenes.

Like HDR10, HDR10+ is open and royalty-free. It was created as an alternative to, and competitor of, Dolby Vision. Founded by Samsung, 20th Century Fox, and Panasonic, the format is backed by major hardware players and big Hollywood film companies.

20th Century Studios is a Disney subsidiary, meaning an extensive library of HDR10+ content is readily available. Other movie studios to join 20th Century as HDR10+ content partners include Universal Pictures and Warner Bros.

Among online streaming services, Prime Video, Hulu, Paramount+, and YouTube support HDR10+. According to Samsung, more than 3,100 televisions and projectors from over 20 brands and 120 partners support the HDR10+ standard.

Although HDR10+ is royalty-free, adopters (source providers, display manufacturers, etc.) are expected to pay HDR10+ Technologies a logo certification and licensing program fee ranging from $2,500 to $10,000.

Dolby Vision

Dolby Vision is Dolby’s proprietary HDR format. It was made official in 2014, a year before HDR10, but it is less widespread because of the costs associated with it.

To use the format or get a TV Dolby Vision-certified, manufacturers must pay Dolby a yearly $2,500 license fee plus a royalty of one to three dollars on every unit sold.

Dolby Vision is the only HDR format to support 12-bit color. The two formats mentioned above are designed for only 10-bit colors. (More about the two color depths later.)

It’s worth mentioning that Dolby came up with Dolby Vision IQ, a Dolby HDR extension that helps render Dolby Vision content according to the ambient light.

In other words, Vision IQ is an adaptive, intelligent HDR TV technology that alters the brightness, contrast, and overall visual performance based on how dark or bright the living room is.

In 2021, HDR10+ came up with something similar, called HDR10+ Adaptive. It carries out the same role as Dolby Vision IQ but for HDR10+ only. It can be found on select Samsung and Panasonic TVs, such as Samsung’s Neo QLED sets.

HDR10+ Adaptive shows that HDR10+ intends to match Dolby Vision toe-to-toe, making it harder for Dolby to justify the licensing fee and royalty it charges.

HLG and Advanced HDR

Despite not being mainstream, HLG (hybrid log-gamma) and Advanced HDR are other HDR formats worth mentioning.

BBC and NHK (a Japanese broadcaster) developed HLG for live broadcasts. It is not necessarily intended for local media playback or streaming. HLG is static HDR and compatible with DV and HDR10 displays.

HLG HDR makes broadcast TV signals HDR-compatible without additional bandwidth demands, significantly reducing the broadcast’s complexity and cost.

HLG is royalty-free and doesn’t use metadata to tell the television how to display HDR content. That is by design: metadata can get lost along the broadcast chain, causing colors to display incorrectly or other issues.

HLG uses a gamma curve, like SDR's, for the darker part of the signal and adds a logarithmic curve for the brighter highlights on top of it, hence “log” and “gamma” in the name.
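For reference, ITU-R BT.2100 defines the HLG curve (its OETF, which converts normalized scene light E into a signal value E′) in exactly this two-part way. The Python sketch below implements that formula with the constants from the standard: a square-root (gamma) segment up to E = 1/12 and a logarithmic segment above it, with the two segments meeting at a signal value of 0.5.

```python
import math

# Constants of the HLG reference OETF in ITU-R BT.2100.
A = 0.17883277
B = 1 - 4 * A                  # 0.28466892
C = 0.5 - A * math.log(4 * A)  # about 0.55991073

def hlg_oetf(e: float) -> float:
    """Map normalized scene light E in [0, 1] to an HLG signal value E' in [0, 1]."""
    if not 0.0 <= e <= 1.0:
        raise ValueError("E must be in [0, 1]")
    if e <= 1 / 12:
        return math.sqrt(3 * e)          # gamma (square-root) segment for darker tones
    return A * math.log(12 * e - B) + C  # logarithmic segment for highlights

for e in (0.0, 0.01, 1 / 12, 0.5, 1.0):
    print(f"E = {e:.4f} -> E' = {hlg_oetf(e):.4f}")
```

Because the lower segment is an ordinary gamma curve, an SDR TV shows an HLG signal reasonably well, which is what makes the format broadcast-friendly.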

Because HLG doesn’t use metadata, it cannot adjust the picture scene by scene the way dynamic-metadata formats do. HLG is excellent at making visuals more vivid and brighter. However, it isn’t as adept at handling low light as other HDR formats.

Advanced HDR is courtesy of Technicolor, a film production company. AHDR employs dynamic metadata and supports 12-bit colors. It leverages Technicolor’s several decades of movie-making excellence and Philips’ extensive experience in high-quality TVs and video standards.

Advanced HDR is compatible with cable, satellite, ATSC 3.0, OTT broadcast, and other distribution platforms or content mixes.

AHDR has been used for MLB broadcasts with Time Warner Cable and for NBCUniversal's coverage of the 2016 Summer Olympics.

Both HLG and AHDR have a long way to go on the content front before they can compete with the three primary HDR formats.

Bonus: Color: 10-bit vs. 12-bit

Color bit depth denotes how much data TVs can employ to instruct a pixel about the color to display. A TV with a higher color depth exhibits more hues and decreases banding in scenes with similar color shades.

10-bit and 12-bit refer to the number of bits per color channel (red, green, and blue), which determines how many shades a display can render. More bits mean a more extensive color spectrum and more true-to-life visuals.

Both are HDR color depths. SDR content, by contrast, is typically 8-bit, which works out to about 16.7 million colors. Here is a brief comparison between 10-bit and 12-bit color.

| | 10-bit | 12-bit |
|---|---|---|
| Shades per RGB channel | 1,024 | 4,096 |
| Total colors | 1.07 billion | 68.7 billion |
| Usage in HDR | Current standard | Future-proof |

12-bit color offers four times as many shades per channel as 10-bit (and 64 times as many total colors), which means smoother, more realistic images. Despite offering fewer colors, 10-bit remains relevant because most HDR standards max out at 10-bit.
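The figures in the table follow directly from the bit depth. Each channel has 2 to the power of the bit depth shades, and the total color count is that number cubed, one factor each for red, green, and blue. A quick Python check:

```python
def color_stats(bits_per_channel: int) -> tuple[int, int]:
    """Return (shades per R/G/B channel, total displayable colors)."""
    shades = 2 ** bits_per_channel
    return shades, shades ** 3  # red x green x blue combinations

for bits in (8, 10, 12):
    shades, total = color_stats(bits)
    print(f"{bits}-bit: {shades:,} shades per channel, {total:,} colors")
```

Running it prints 16,777,216 colors for 8-bit, 1,073,741,824 for 10-bit, and 68,719,476,736 for 12-bit, which is where the 16.7 million, 1.07 billion, and 68.7 billion figures come from.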

Although Dolby Vision supports 12-bit, you may not be able to experience it in the real world as the equipment needed to support 12-bit color depth may not always be readily available. For instance, the TV display itself may not be capable of delivering 12-bit colors.

Also, 12-bit color depth’s advantages are more evident in high-quality content production workflows or professional-grade displays. Most content mastered and delivered currently is 10-bit.

Although 12-bit may be the future, whether it becomes the norm or supplants 10-bit ultimately depends on how accessible Dolby Vision becomes or whether other HDR standards adopt 12-bit color, and neither seems likely anytime soon.

Is a Special HDMI Cable Required for HDR?

No, there’s no special HDMI cable needed for HDR. Any cable with enough bandwidth for HDMI 2.0a or later can carry HDR.

HDMI cables sold as “HDR-compatible” essentially support the HDMI version that can facilitate HDR. In other words, those cables can do the high bandwidth transfer necessary for HDR, among other things (more on them later).

If you’d like to buy cables that support HDR, look at the Belkin HDMI Cable, Maxonar HDMI Cable, or UGREEN HDMI Cable.

What are the Requirements for HDR?

Here are a few things needed for HDR.

- Bandwidth: For 4K HDR at 60 Hz, the link must carry up to 18 Gbps. Even though HDMI 2.0 doesn’t officially support HDR, its 18 Gbps data rate is good enough in most cases. That bandwidth is adequate to move video, audio, text, picture tone, and brightness information.

- 10-bit color: 12-bit is ideal for HDR but not essential. 10-bit is adequate and represents the minimum color depth the panel must have to showcase HDR content with integrity or show the contrast between the darkest and lightest colors.

- HDR-capable display: The TV, projector, or monitor display must be designed or equipped to leverage and reproduce HDR’s wide color gamut, widened dynamic range, boosted brightness levels, etc.

- HDR-capable software/source device: The gaming console, Ultra HD Blu-ray player, streaming service, graphics card, etc., must be capable of generating or processing HDR content so that HDR-compatible displays can play it with the enhanced color gamut and dynamic range preserved. 4K televisions and Blu-ray players must carry the necessary firmware to manage HDR.

- Premium High Speed or Ultra High Speed HDMI cable: No “special” HDR cable exists. But as newer HDMI versions are released, new HDMI cable certifications follow to support the latest standard. Since HDR support begins with HDMI 2.0a, cables certified for 2.0a-level bandwidth or higher are best suited for HDR: Premium High Speed cables (rated for 18 Gbps) or Ultra High Speed cables (rated for 48 Gbps).
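To see why 18 Gbps covers most, but not all, 4K HDR signals, the Python sketch below models an HDMI 2.0 TMDS link in simplified form. Its assumptions: the standard CTA-861 timing for 3840 × 2160 at 60 Hz (4400 × 2250 total pixels including blanking, a 594 MHz pixel clock), HDMI 2.0's 600 MHz TMDS clock limit, three data channels with 8b/10b encoding, and HDMI's deep-color rules (10-bit 4:4:4 raises the TMDS clock 1.25×, 4:2:2 stays at the 8-bit rate, 4:2:0 halves it). HDMI 2.1's FRL signaling is not modeled.

```python
# Simplified model of an HDMI 2.0 (TMDS) link; HDMI 2.1's FRL mode is not modeled.
PIXEL_CLOCK_4K60_HZ = 4400 * 2250 * 60  # CTA-861 3840x2160 @ 60 Hz, incl. blanking
HDMI20_MAX_TMDS_HZ = 600e6              # HDMI 2.0 TMDS clock limit
BITS_PER_TMDS_CHAR = 10                 # 8b/10b: each 8-bit character is sent as 10 bits
TMDS_DATA_CHANNELS = 3

def tmds_clock(pixel_clock_hz: float, bits_per_component: int, chroma: str) -> float:
    """Approximate TMDS clock for a bit depth and chroma format ("4:4:4" also covers RGB)."""
    if bits_per_component not in (8, 10, 12):
        raise ValueError("expected 8, 10, or 12 bits per component")
    if chroma == "4:2:2":
        # 4:2:2 is packed into the same 24 bits per pixel up to 12-bit.
        return pixel_clock_hz
    scale = bits_per_component / 8  # deep color: 10-bit -> 1.25x, 12-bit -> 1.5x
    if chroma == "4:2:0":
        scale /= 2                  # 4:2:0 halves the TMDS clock
    elif chroma != "4:4:4":
        raise ValueError(f"unknown chroma format: {chroma}")
    return pixel_clock_hz * scale

def link_gbps(tmds_hz: float) -> float:
    """Total bit rate on the three TMDS data channels, in Gbps."""
    return tmds_hz * BITS_PER_TMDS_CHAR * TMDS_DATA_CHANNELS / 1e9

for bits, chroma in [(8, "4:4:4"), (10, "4:4:4"), (10, "4:2:2"), (10, "4:2:0")]:
    clock = tmds_clock(PIXEL_CLOCK_4K60_HZ, bits, chroma)
    verdict = "fits" if clock <= HDMI20_MAX_TMDS_HZ else "exceeds"
    print(f"4K60 {bits}-bit {chroma}: {link_gbps(clock):.2f} Gbps ({verdict} HDMI 2.0)")
```

Under this model, 8-bit 4:4:4 needs 17.82 Gbps and just fits, while 10-bit 4:4:4 needs about 22.3 Gbps and does not. That is why HDMI 2.0 devices send 4K60 HDR with 4:2:2 or 4:2:0 chroma.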

FAQs

Can I Watch Dolby Vision Movies on a TV That Supports HDR10 or HDR10+?

Yes, Dolby Vision content can be played on an HDR10/HDR10+ screen.

You won’t get any error message saying content is incompatible with the display or anything along those lines.

However, the Dolby Vision movie will not play in its full glory; it will be downgraded to the HDR10/HDR10+ standard the display supports.

Dolby Vision content usually has HDR10 as its base layer, which is why it can be played on devices that don’t support the Dolby standard. But if DV content doesn’t use HDR10 as its base, the video will be output in SDR.

Can I Watch HDR10 or HDR10+ Movies on a TV That Supports Dolby Vision?

Yes, you can watch HDR10 movies on a Dolby Vision-compatible TV panel. The cross-compatibility between HDR10 and Dolby Vision is generally seamless.

However, things can get trickier with HDR10+ and Dolby Vision: the two compete directly, so a TV that supports one may not support the other.

Note: Dolby Vision is generally regarded as the most capable of the three HDR standards. HDR10+ comes close to DV but is not quite on the same level.

Most TVs support either Dolby Vision or HDR10+, but the two aren’t mutually exclusive, and some models support both. Read your TV manual to confirm which formats it supports.

Conclusion

Not all HDMI versions support HDR. The HDMI version must be at least 2.0a to be compatible with HDR. Because there are different HDR formats, certain HDR types may require a more current HDMI standard to work.

Also, for HDR to work, every connected device must support the feature. If the source device supports HDR but the display doesn't, you won't get HDR.

Moreover, the specific HDR formats supported could vary across devices despite having identical HDMI version support.

Currently, HDR10 and HDR10+ are the more common standards thanks to their cost-efficiency. Dolby Vision, on the other hand, is the premium option and is generally associated with premium TVs.

Catherine Tramell has been covering technology as a freelance writer for over a decade. She has been writing for Pointer Clicker for over a year, further expanding her expertise as a tech columnist. Catherine likes spending time with her family and friends and her pastimes are reading books and news articles.