How to Choose the Best TV HDMI Port for Gaming

- HDMI 2.1 ports are essential for high-performance gaming, offering features like 4K at 120 Hz, VRR, and ALLM, which are not available in HDMI 1.4 or 2.0.

- Different HDMI ports offer varied capabilities; for example, HDMI 2.0 supports 4K at 60 Hz, while HDMI 2.1 supports up to 4K at 120 Hz and advanced gaming features.

- Identify the HDMI port version on your TV that suits your needs (e.g., HDMI 2.1 for high-end gaming) and use the appropriate cable to ensure compatibility and optimal performance.

Choosing the right HDMI port on your TV can make a huge difference, especially for gaming.

This guide will simplify which HDMI port to use for the best gaming experience, ensuring you get the most out of your playtime.

Does It Matter Which HDMI Port I Use on My TV? (Especially for Gaming)

Yes, the HDMI port you use on your TV does matter.

If you have high-performance streaming requirements or want to benefit from features unique to a particular HDMI version, you must be selective about the HDMI port you use on your television.

HDMI 2.1 is the latest from the HDMI Forum, and it is a significant improvement over the previous versions, including its immediate predecessor.

It packs advanced features such as 8K video at up to 60 Hz and automatic switching to a low-latency mode.

Neither the HDMI 1.4 port nor the HDMI 2.0 port on your television has the bandwidth for 4K at 120 Hz. We’ll cover their capabilities in more detail later in the article.
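The bandwidth gap can be checked with some rough arithmetic. The sketch below is a simplification: it assumes 8-bit RGB (24 bits per pixel) and counts only the visible pixels, whereas a real HDMI link also carries blanking intervals and encoding overhead, so actual requirements are higher. The function name `raw_video_gbps` is made up for illustration.

```python
def raw_video_gbps(width: int, height: int, refresh_hz: int,
                   bits_per_pixel: int = 24) -> float:
    """Uncompressed data rate of the visible pixels only, in gigabits per second."""
    return width * height * refresh_hz * bits_per_pixel / 1e9


HDMI_2_0_MAX_GBPS = 18.0  # nominal link bandwidth of HDMI 2.0

rate_4k60 = raw_video_gbps(3840, 2160, 60)
rate_4k120 = raw_video_gbps(3840, 2160, 120)

print(f"4K @ 60 Hz:  {rate_4k60:.2f} Gbps")
print(f"4K @ 120 Hz: {rate_4k120:.2f} Gbps")
print("4K @ 120 Hz fits within HDMI 2.0:", rate_4k120 <= HDMI_2_0_MAX_GBPS)
```

Even under these generous assumptions, 4K at 120 Hz works out to roughly 24 Gbps, which is already more than HDMI 2.0's 18 Gbps.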

VRR, ALLM, and eARC are Unique to HDMI 2.1

Besides offering industry-leading bandwidth or data transfer speeds, HDMI 2.1 also introduces new features and considerably improves upon what was already available.

For gamers, in particular, HDMI 2.1 offers features such as ALLM (automatic low-latency mode) and VRR (variable refresh rate).

Like AMD FreeSync or Nvidia G-Sync, VRR lets the TV match its refresh rate to the frame rate the console is actually outputting. When the frame rate fluctuates, the display adjusts dynamically, which reduces screen tearing and stutter.

ALLM makes your TV gaming-ready automatically. The console signals over HDMI that a game is running, and that signal triggers the television’s mode-switching functionality.
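To make the idea concrete, here is a toy timing model, not how any console or TV actually implements VRR. It compares when a finished frame can appear on a fixed 60 Hz display (with V-Sync) against a VRR display. The 48 to 120 Hz range and both function names are assumptions chosen for illustration.

```python
import math


def shown_after_fixed_ms(render_ms: float, refresh_hz: float) -> float:
    """Fixed refresh with V-Sync: a finished frame waits for the next refresh tick."""
    interval_ms = 1000.0 / refresh_hz
    ticks = max(1, math.ceil(render_ms / interval_ms))
    return ticks * interval_ms


def shown_after_vrr_ms(render_ms: float, min_hz: float, max_hz: float) -> float:
    """VRR: the display refreshes when the frame is ready, within its supported range."""
    shortest_ms = 1000.0 / max_hz  # the panel cannot refresh faster than max_hz
    longest_ms = 1000.0 / min_hz   # below min_hz the panel has to refresh anyway
    return min(max(render_ms, shortest_ms), longest_ms)


render_ms = 20.0  # a frame that took 20 ms to render (50 frames per second)
print(f"Fixed 60 Hz:   shown after {shown_after_fixed_ms(render_ms, 60):.1f} ms")
print(f"VRR 48-120 Hz: shown after {shown_after_vrr_ms(render_ms, 48, 120):.1f} ms")
```

In this model the fixed display holds the frame back until its second refresh tick, while the VRR display shows it as soon as it is ready.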

As a result, your TV switches to gaming mode each time you launch a game without any effort from your side.

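The eARC (enhanced audio return channel) function caters to the casual user. It allows playing TV audio through an external speaker, such as a soundbar, and has enough bandwidth for higher-quality audio formats than the older ARC.

HDMI 2.0 and earlier standards do not include VRR, ALLM, or eARC as part of the specification, although plain ARC has been around since HDMI 1.4.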

Do Different HDMI Ports Make a Difference?

Yes, HDMI ports do make a difference, primarily concerning data transfer speeds and the features specific to certain HDMI versions you benefit from.

For example, if the HDMI port on your TV is v2.1, you may connect your PlayStation 5 to the television and play 4K games at 120 Hz.

If the port were HDMI 2.0 instead, you wouldn’t be able to do 4K gaming at 120 Hz. The refresh rate would drop to 60 Hz.

To get 120 Hz, you would have to compromise on video resolution and drop it to 1440p (QHD).

The HDMI signal will still pass through, though, because HDMI is backward-compatible.

How Do I Know Which HDMI Port to Use?

Since HDMI is backward-compatible, you may plug an HDMI cable into any HDMI port on your TV or monitor. Video and audio will work each time, just limited to what that port supports.

Generally, HDMI 2.0 is enough for most users and usage scenarios. But if you need the most advanced features and the highest bandwidth HDMI offers, look for an HDMI 2.1 port on your TV.

For instance, if you’d like to game at 4K resolution and a 120 Hz refresh rate, you will have to plug your console into the HDMI 2.1 port on your TV.

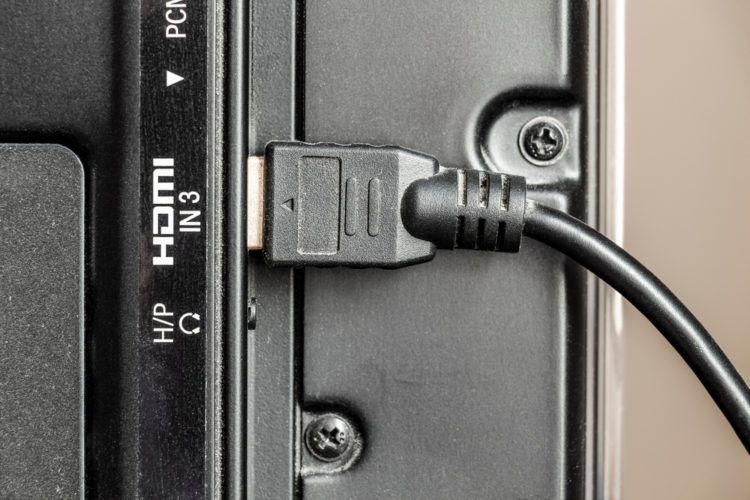

HDMI Port Labels

Identifying the HDMI ports on a TV shouldn’t be a problem since those sockets contain tiny labels indicating the HDMI standard they support.

And if those visual cues aren’t there, you can always check the TV’s user manual.

At times, the labels could use adjectives (good, better, and best) to denote the port’s standard instead.

The term “good” usually indicates HDMI 1.4. The adjectives “better” and “best” signify HDMI 2.0 and 2.1, respectively.

These adjectives make things simpler for people who are not familiar with what the various HDMI versions signify.

Other TVs may label the ports with the names of the devices meant to be connected to them.

For instance, the ports could be marked as “DVD,” “GAME,” and “STB.” STB denotes “set-top box.”

You may plug your cable TV box into the “GAME” or “DVD” port since HDMI doesn’t discriminate that way.
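The labeling conventions above can be summed up as a small lookup. This is only a sketch based on the mapping described in this article; real TVs label their ports in many ways, and the helper name `version_from_label` and the sample label strings are hypothetical.

```python
from typing import Optional

# Adjective labels and the HDMI versions they usually indicate, per this article.
ADJECTIVE_TO_VERSION = {"good": "1.4", "better": "2.0", "best": "2.1"}


def version_from_label(label: str) -> Optional[str]:
    """Return the HDMI version a port label hints at, or None if the label doesn't say."""
    # An explicit version number printed on the label wins.
    for version in ("2.1", "2.0", "1.4"):
        if version in label:
            return version
    # Otherwise look for one of the adjectives as a whole word.
    words = label.lower().replace("(", " ").replace(")", " ").split()
    for word in words:
        if word in ADJECTIVE_TO_VERSION:
            return ADJECTIVE_TO_VERSION[word]
    # Device-name labels such as "GAME" or "STB" say nothing about the version.
    return None


for label in ("HDMI 2.1", "HDMI 3 (Best)", "GAME", "STB"):
    print(label, "->", version_from_label(label))
```

When the lookup returns `None`, the TV’s user manual is the place to check.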

You Need the Right Cables

Once you’ve identified the correct HDMI port, check that the cables you use meet the port’s data transmission requirements.

For example, you’ll need an ultra-high-speed HDMI cable to take full advantage of HDMI 2.1 and carry data at up to 48 Gbps.

A premium high-speed or plain high-speed HDMI cable will still carry a picture, but it may not deliver all the benefits of HDMI 2.1 the way a suitable cable would.
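One way to think about this is that the connection is only as fast as its slowest part. The sketch below is a simplified model, assuming the nominal bandwidth figures commonly cited for each HDMI version and cable category; the function name `link_limit_gbps` is hypothetical, and real devices negotiate capabilities in more detail than a single number.

```python
# Nominal maximum bandwidths in Gbps (commonly cited figures, used here as assumptions).
PORT_GBPS = {"1.4": 10.2, "2.0": 18.0, "2.1": 48.0}
CABLE_GBPS = {
    "High Speed": 10.2,
    "Premium High Speed": 18.0,
    "Ultra High Speed": 48.0,
}


def link_limit_gbps(source_version: str, tv_port_version: str, cable: str) -> float:
    """The slowest of source, TV port, and cable caps the whole connection."""
    return min(PORT_GBPS[source_version], PORT_GBPS[tv_port_version], CABLE_GBPS[cable])


# An HDMI 2.1 console on an HDMI 2.1 port, with two different cables.
print(link_limit_gbps("2.1", "2.1", "Ultra High Speed"))
print(link_limit_gbps("2.1", "2.1", "Premium High Speed"))
```

With the premium high-speed cable, the model caps the link at 18 Gbps even though both ends are HDMI 2.1.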

If you don’t have an ultra-high-speed cable lying around, here are some excellent cables for your consideration:

- Keymox 8K HDMI 2.1 Cable

- CableCreation 8K 48 Gbps Ultra High-Speed HDMI Cable

- Monoprice 8K Certified Braided Ultra High Speed HDMI 2.1 Cable

- Anker 8K @ 60 Hz Ultra High Speed HDMI Cable

Since the focus is on cables for traditional output devices such as TVs, the cords mentioned above come with the standard-size or Type A HDMI connector. As a result, there shouldn’t be any confusion.

Does It Matter Which HDMI Port I Use for 4K?

Yes, as mentioned earlier, the HDMI port you use on your TV or any other device significantly impacts your 4K movie-watching or gaming experience.

However, how much the experience differs depends on the HDMI versions involved.

HDMI 2.0 supports 4K streaming, and so does HDMI 1.4. But the two handle the resolution differently.

Here is an overview of how HDMI ports supporting different versions handle 4K:

- HDMI 1.4 supports 4K video at 30 Hz since its maximum bandwidth is capped at 10.2 Gbps.

- HDMI 2.0’s ability to transfer data at 18 Gbps lets it carry 4K content at up to 60 Hz. Most existing 4K TVs support this standard.

- HDMI 2.0’s updated versions, 2.0a and 2.0b, match v2.0’s bandwidth numbers and do not offer anything revolutionary. They do, however, bring improvements on the HDR (high dynamic range) front.

- With a maximum bandwidth of up to 48 Gbps, HDMI 2.1 does 4K at 120 Hz refresh and 8K at 60 Hz. As discussed above, the increased bandwidth allows for new features such as eARC, ALLM, VRR, etc.
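The overview above boils down to a simple rule for picking a port. Here is a minimal sketch using only the 4K refresh rates listed in this article; the function name `lowest_version_for_4k` is made up for illustration, and it ignores other factors such as color depth or compression.

```python
from typing import Optional

# Highest 4K refresh rate per HDMI version, as listed above (oldest version first).
MAX_4K_REFRESH_HZ = {"1.4": 30, "2.0": 60, "2.1": 120}


def lowest_version_for_4k(refresh_hz: int) -> Optional[str]:
    """Return the oldest HDMI version that handles 4K at the given refresh rate."""
    # Dicts keep insertion order, so versions are checked from oldest to newest.
    for version, max_hz in MAX_4K_REFRESH_HZ.items():
        if refresh_hz <= max_hz:
            return version
    return None  # beyond the figures covered in this article


for hz in (30, 60, 120):
    print(f"4K @ {hz} Hz needs at least HDMI {lowest_version_for_4k(hz)}")
```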

HDMI 1.0 is now pretty much obsolete, and TVs have been made with HDMI 1.4 ports for years. Unless your TV is a relic of those good old days, it’s almost sure to have an HDMI 1.4 port, if not a newer one.

Conclusion

HDMI has become a ubiquitous audio/video interface and an integral part of most consumer electronic devices thanks to its flexibility, convenience, and simplicity.

However, like everything that gets better with time, HDMI ports have also been at the receiving end of continual improvements and upgrades. As a shrewd user, you must know what those enhancements are and how to use them to boost your user experience.

Using an HDMI 2.0 port on your TV when you could have very well used the adjacent 2.1 port is not just a wasted opportunity but also demonstrates you just don’t know better.

Ignorance could be bliss at times, but it’s certainly not the case when it comes to using HDMI on your devices.

Hopefully, now that you have read this piece and learned how the HDMI 1.4, 2.0, and 2.1 standards differ in their capabilities, you will use the right HDMI port on your TV or other devices going forward.

Catherine Tramell has been covering technology as a freelance writer for over a decade. She has been writing for Pointer Clicker for over a year, further expanding her expertise as a tech columnist. Catherine likes spending time with her family and friends and her pastimes are reading books and news articles.