HDR 8-Bit vs 10-Bit: Differences in HDR Color Depth

What To Know

- 8-bit color depth produces 16.7 million colors, while 10-bit achieves over 1 billion colors, offering richer color and shade variations in images and videos.

- Despite the significant difference in color options, the average human eye often cannot discern between 8-bit and 10-bit, although the difference is noticeable on high-quality panels.

- True HDR is inherently 10-bit, offering a more detailed and dynamic color range, but 8-bit panels may still be marketed as HDR if they meet certain brightness and contrast standards.

In this article, I’ll quickly guide you through the intriguing world of HDR color depth, focusing on the key differences between 8-bit and 10-bit HDR.

Let’s discover the essence of color depth in displays and how it elevates your viewing with HDR technology.


What’s 8-Bit? What’s 10-Bit?

The terms “8-bit” and “10-bit” describe an image’s color depth, that is, how many bits are used for each color channel. The greater the number of bits, the more colors and shades a picture can hold.

To be specific, 8-bit means a TV or display can make 256 shades each of red, green, and blue (RGB). The variations put together amount to 16,777,216 colors (256 x 256 x 256).

10-bit makes 1,024 variants each of red, green, and blue. Compute them all (1,024 x 1,024 x 1,024), and the total is 1,073,741,824 color options.

And there is also 12-bit, taking the possible color option figures even higher.
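If you’d like to check the arithmetic yourself, here is a minimal Python sketch. The function names are my own and purely illustrative; the only assumption is that each of the three channels (red, green, blue) gets the same number of bits.

```python
def shades_per_channel(bits: int) -> int:
    """Number of distinct levels one color channel can take at this bit depth."""
    return 2 ** bits


def total_colors(bits: int) -> int:
    """Total combinations of red, green, and blue at this bit depth."""
    return shades_per_channel(bits) ** 3


for bits in (8, 10, 12):
    print(
        f"{bits}-bit: {shades_per_channel(bits):,} shades per channel, "
        f"{total_colors(bits):,} colors in total"
    )
```

Each extra bit doubles the shades per channel, which is why two extra bits per channel multiply the total color count by 64.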

Of the three, 8-bit is the most common. It’s all around us. The content on TVs, DVDs, standard Blu-rays, smartphones, and most of what you view online is 8-bit.

Producers continue to make 10-bit content (because it offers a lot more information to play with at the editing table), but you’re unlikely to have watched it on a 10-bit panel.

How different are 8-bit and 10-bit from each other in the real world? Not much. The average human eye cannot discern between the two.

But if you look closely or use a high-quality panel, the differences between the two standards are hard not to notice.

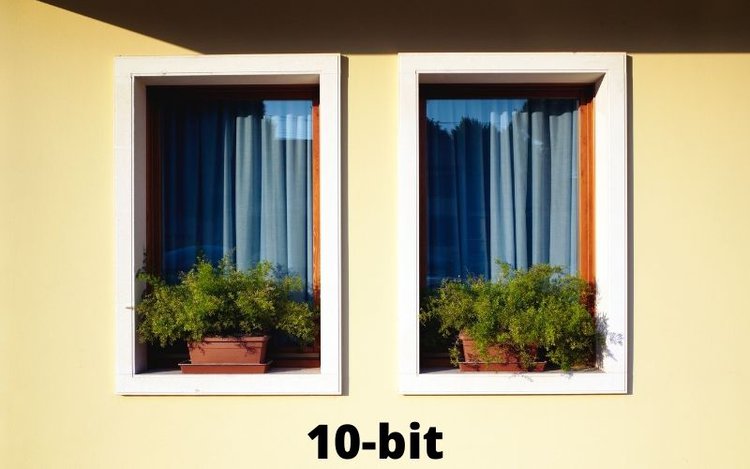

Are There 8-Bit and 10-Bit Images?

Bit depth determines how many distinct colors an image can store, whether it is video footage or a still picture.

An 8-bit picture is basically shades of red, green, and blue, each stored in 2^8 (256) possible levels.

Similarly, 10-bit images exist. But like their video counterparts, the boost in color information isn’t easily discernible at a glance.

The 10-bit upgrade is more challenging to perceive in still images than in moving video frames.

Is HDR 8-Bit or 10-Bit?

Before answering, here is a quick intro to HDR.

HDR is a display-related tech that improves video signals, altering or boosting the colors and luminance in images and videos.

More detailed and darker shadows and clear and brighter highlights are some of the hallmarks of true HDR.

Contrary to general perception, HDR is neither one of a display’s intrinsic traits (contrast, brightness, and color performance) nor something built into the hardware.

The display lays the groundwork for HDR to shine. Because HDR is so dependent on actual display quality, HDR performance is not identical across TVs and external monitors.

Now, to answer the original question, HDR is 10-bit.

Some may claim 8-bit is also HDR, particularly TV and monitor manufacturers, which is incorrect. Do not let them mislead you.

There are different HDR formats: HDR10, Dolby Vision, HDR10+, HLG10, etc. These formats support or necessitate a 10-bit (or higher) color depth.

Dolby Vision, for instance, supports 12-bit color depth, alongside a few other HDR variants such as HDR10+.

However, that doesn’t imply that these HDR formats require a 10-bit display. Several 8-bit display smartphones support HDR10+.

Also, there are quite a few 8-bit DisplayHDR-certified monitors.

Can HDR Be 8-Bit?

As mentioned above, HDR is 10-bit. Put differently, for HDR to truly live up to its billing, you must pair it with a 10-bit display.

But the discussion doesn’t end here.

Since 8-bit is short on colors compared to 10-bit, it cannot accurately produce all the hues needed to display HDR colors.

In technical speak, 8-bit doesn’t provide enough steps per channel to cover the wide color gamut HDR relies on without leaving visible gaps between shades.

Then there’s also the “color banding” issue (smooth gradients breaking up into visible stripes) with 8-bit, which a 10-bit or 12-bit reproduction can address.

There can be banding when displaying natural gradients such as clear skies, sunsets, and dawns.

Increasing the bits for each color channel helps alleviate the issue, if not eliminate it.
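To get a feel for why more bits help, here is a rough Python sketch that counts how many distinct steps a subtle gradient gets at each bit depth. The gradient range (40% to 50% brightness), the 3,840-pixel width, and the function names are all my own assumptions for illustration, and the sketch ignores HDR transfer curves entirely.

```python
def quantize(value: float, bits: int) -> int:
    """Map a brightness value in the 0.0 to 1.0 range to the nearest integer code."""
    max_code = (1 << bits) - 1
    return round(value * max_code)


def distinct_levels(start: float, end: float, width: int, bits: int) -> int:
    """Count the distinct codes used by a linear gradient that is `width` pixels wide."""
    codes = {
        quantize(start + (end - start) * x / (width - 1), bits)
        for x in range(width)
    }
    return len(codes)


WIDTH = 3840  # assumed: one row of a 4K-wide frame

for bits in (8, 10):
    levels = distinct_levels(0.40, 0.50, WIDTH, bits)
    print(
        f"{bits}-bit: {levels} distinct steps, "
        f"each band roughly {WIDTH / levels:.0f} pixels wide"
    )
```

Fewer steps spread over the same width means wider bands, and wide bands are what the eye picks up as stripes in a sky or sunset.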

Dithering also helps address the banding problems on displays with limited color palettes.
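As a loose illustration of the idea, the Python sketch below converts a 10-bit value to 8-bit in two ways: plain truncation and simple random dithering. This is only one basic form of dithering, not necessarily what any particular display or encoder does, and the function names, the divide-by-four scaling, and the sample value are assumptions of mine.

```python
import random


def to_8bit_truncate(code10: int) -> int:
    """Drop the two lowest bits of a 10-bit code (no dithering)."""
    return code10 >> 2


def to_8bit_dithered(code10: int, rng: random.Random) -> int:
    """Add random noise below one 8-bit step before rounding down."""
    ideal = code10 / 4  # the 8-bit value we would like, fractions included
    return min(255, int(ideal + rng.random()))


rng = random.Random(0)  # fixed seed so the run is repeatable
code10 = 513            # assumed sample value; ideally 128.25 on the 8-bit scale

samples = [to_8bit_dithered(code10, rng) for _ in range(10_000)]
average = sum(samples) / len(samples)

print("truncated:", to_8bit_truncate(code10))
print("dithered average over 10,000 pixels:", round(average, 2))
```

Every dithered pixel is still a plain 8-bit value, but across a patch of pixels the mix of neighboring values averages out close to the in-between shade, which softens the hard edges of the bands.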

Why is banding a concern with HDR? Because HDR offers an increased dynamic range.

Richer HDR content requires greater bit depth to look its best, which includes avoiding banding.

When HDR is paired with an 8-bit panel, it tries to emulate a 10-bit effect, similar to how a 4K panel tries to upscale or make 1080p content look as close to 4K as possible.
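One technique often used for this kind of emulation is frame rate control (FRC), where the panel flickers between two neighboring 8-bit shades quickly enough that the eye blends them. Whether a given TV works this way is an assumption on my part, and the Python sketch below is a simplified model with a hypothetical function name and a fixed four-frame cycle, not a description of any real panel’s firmware.

```python
def frc_frames(code10: int) -> list[int]:
    """Return four 8-bit frame values whose average approximates a 10-bit code."""
    base, remainder = divmod(code10, 4)
    # Show the next shade up for `remainder` of the four frames, the base shade otherwise.
    return [min(255, base + 1) if i < remainder else base for i in range(4)]


for code10 in (512, 513, 514, 515):
    frames = frc_frames(code10)
    print(code10, "->", frames, "average:", sum(frames) / 4)
```

The panel never shows a true in-between shade; it relies on your eye averaging the frames over time, which is why this counts as emulation rather than native 10-bit.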

So, is 8-bit not HDR?

As defined earlier, HDR is more than just color. It also encompasses contrast and brightness. 8-bit and 10-bit, on the other hand, denote color depth only.

A TV with an 8-bit panel gets marketed as an HDR device because it may meet the brightness (usually in the 1,000 to 4,000 nits range) and contrast level requirements to deem the device worthy of an HDR tag.

In other words, if a TV meets HDR brightness requirements, it’s not technically wrong to label it as HDR.

But it’s still not full-fledged HDR as the color aspect goes ignored.

Conclusion

HDR has no rigid definition or standard, hence the confusion surrounding what it constitutes and what it doesn’t.

And because there are no proper regulations, it’s not uncommon for companies to market their TVs and monitors as HDR-capable even when they are not.

If you’re upgrading to a new TV or monitor and want the new device to have accurate HDR, make sure the panel is 10-bit. Do not fall prey to deceptive marketing.

But also be aware that most displays are native 8-bit. If you’re okay with that, go ahead, as there won’t be any significant setback in the overall viewing experience.

Catherine Tramell has been covering technology as a freelance writer for over a decade. She has been writing for Pointer Clicker for over a year, further expanding her expertise as a tech columnist. Catherine likes spending time with her family and friends and her pastimes are reading books and news articles.